AI Thanabots: Innovation in Medical Education or Ethical Dilemma?

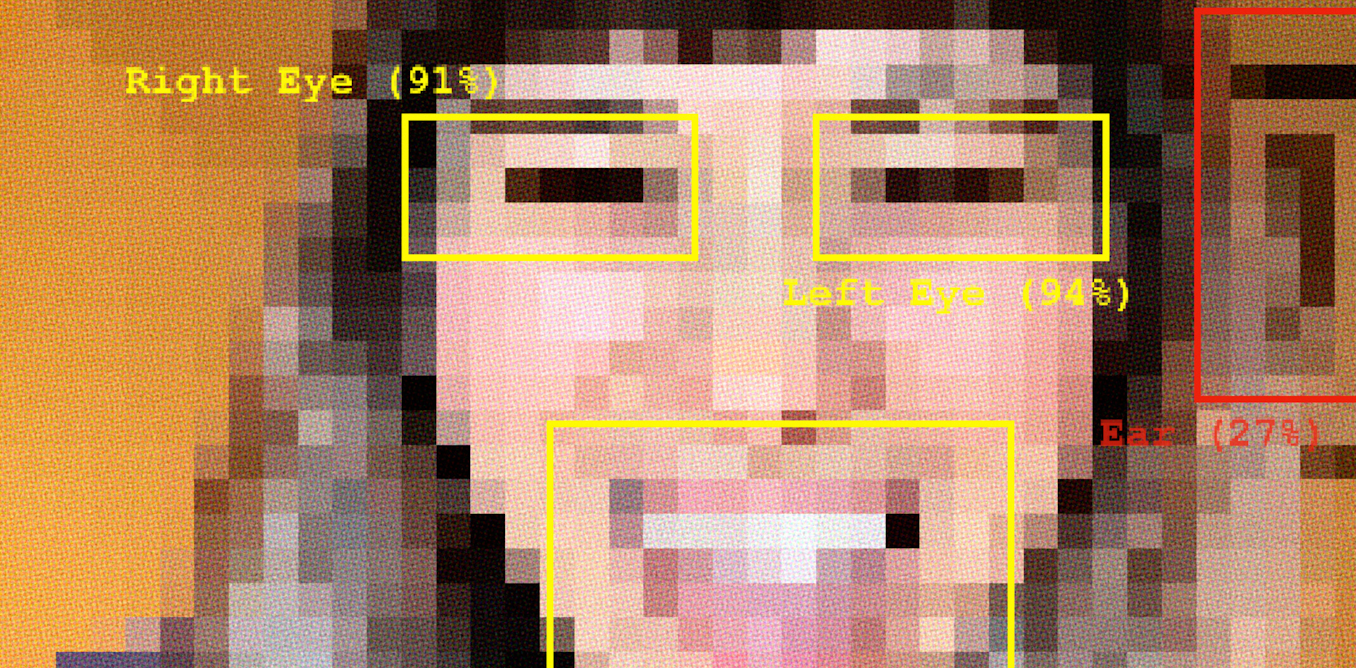

The emergence of artificial intelligence (AI) has sparked a debate about its potential applications in medical education, particularly through the use of digital replicas of deceased individuals, known as thanabots. These AI-driven entities are envisioned to enhance student learning by offering interactive experiences based on real-life medical histories. While this technology may revolutionize educational practices, it raises significant ethical concerns regarding the representation of death and the emotional implications for students.

Understanding Thanabots and Their Potential

The term “thanabot” derives from thanatology, the study of death, and refers to AI-generated representations designed to assist in learning and provide comfort to the bereaved. Current technologies such as Project December and Deep Nostalgia exemplify the growing acceptance of digital afterlives. Project December lets users hold text-based conversations with simulations of deceased individuals, while Deep Nostalgia animates old photographs; both create a sense of interaction with those who have passed on.

In the context of medical education, thanabots could support students during dissections by providing tailored guidance based on a donor’s medical records. This interaction could improve factual learning and foster skills such as empathy and professionalism. While the concept remains largely theoretical, the underlying technology is poised to become a reality.

The potential benefits of thanabots are substantial. By creating a simulated “first patient,” students may gain a deeper understanding of anatomy and develop emotional competencies crucial for their future careers. Yet, these innovations come with notable risks.

Ethical Risks and Cultural Considerations

The integration of thanabots into anatomy education presents a range of ethical and legal challenges. The frameworks governing their use remain underdeveloped, leaving critical questions about ownership, consent, and privacy unanswered. For instance, when a thanabot is created from a deceased donor, who holds the rights to that digital representation? How will consent be obtained from families, and how will the dignity of the deceased be maintained?

Moreover, the emotional impact of interacting with a digital representation of a deceased individual should not be underestimated. Students could form unhealthy attachments to these AI constructs, which might overshadow the authentic experience of engaging with mortality. Cultural norms surrounding death also vary widely; in many cultures, the dead are to be treated with the utmost respect, and the idea of digitally resurrecting them may be considered taboo.

As the authors of a recent study highlight, the use of thanabots in educational settings can create confusion about the nature of death. The distinction between life and death may become blurred, leading students to develop a distorted understanding of what it means to be human. This emotional dissonance could hinder their ability to navigate real-life encounters with death and dying.

In conclusion, while the integration of AI into medical education holds promise, educators must proceed with caution. The allure of technological advancements should not overshadow the need for ethical governance and critical reflection on the implications of these tools. Before introducing digital “ghosts” into anatomy laboratories, it is essential to consider what they truly teach students about life, death, and human dignity.

The authors declare no competing interests and have disclosed no relevant affiliations beyond their academic appointments.
